Soul in the Machine

Some thoughts on making personalities come to life with AI.

[Adapted from an Ignite AI talk @ Betaworks, August 2024]

I’ve spent much of my working time over the past five years trying to make funny, touching, human-feeling personalities come to life with AI. Today I want to share a few things that worked.

I know we’re in a room right now that’s AI-friendly — but to a lot of people, AI just sounds evil. Even the word “artificial” is something we don’t want in any of our products anymore.

In pop culture, AI is unknowable, alien, murderous, and indifferent to us. At worst, it’s Skynet and its legions of Terminators, or the agents in The Matrix. At best, it’s the relationship in Her, and not to spoil a ten-year-old movie, but that doesn’t work out so well.

But we’ve also been imagining lovable, quirky, beneficial robots for decades: companions like R2-D2, or KITT from Knight Rider. As my friend Cameron Marlow recently put it:

“So there are at least 3 AI wearables trying to make the movie Her a reality. Why aren’t there any AI companies bringing KITT to life? I am ready to have a relationship with my car.”

I’ll take Cameron’s thought a step further. I’m much more excited about a future where I’m surrounded not by creepy, near-human androids, but by living, interactive cartoons.

I’ve been an exec at places like YouTube and Duolingo, companies crammed to the gills with machine learning, which we used to entertain and engage people worldwide at a scale that was previously impossible. When our team at Duolingo first started working with generative voices and LLMs, the technology had a lot more limitations. Making anything like live-action video or long-form stories was nowhere in sight, which forced us to keep things simple and cartoony. In retrospect, that felt like genius.

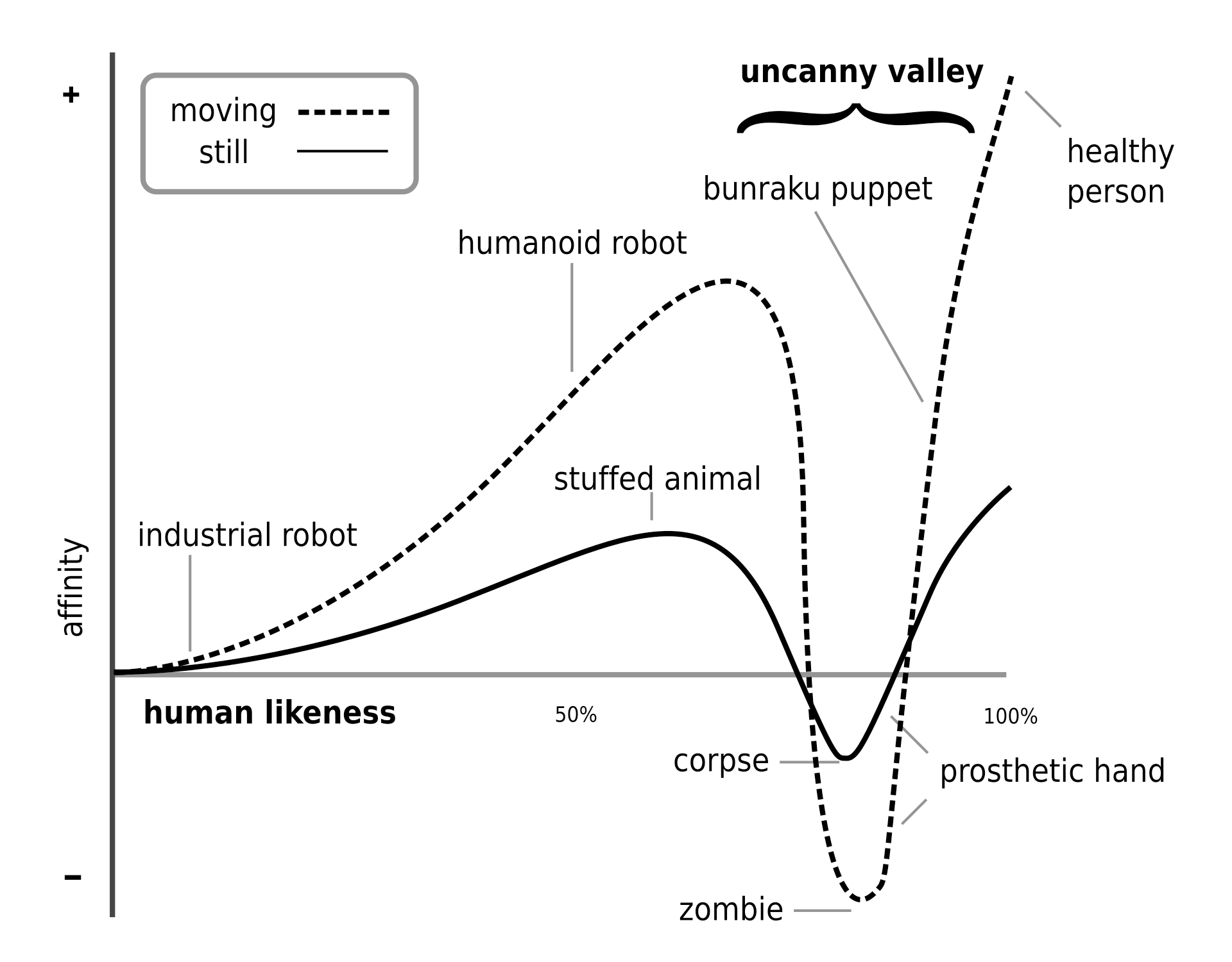

Most of you have probably seen the uncanny valley graph. It’s referenced all the time by game designers and digital animators — going a little cartoony is good in visual design, and this works even in the most lo-fi of ways.

Humans are somehow wired to project life and emotion onto things at the merest suggestion of personhood. In most cases, less is more. There’s a phenomenon called face pareidolia where we don’t just see faces in inanimate things — we immediately respond emotionally to them as well.

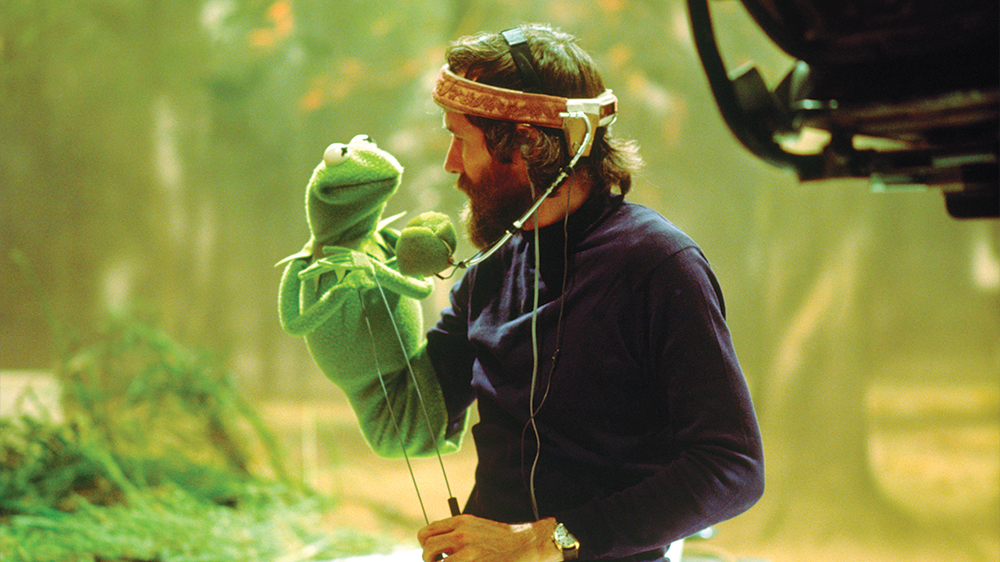

I think this is something artists understand innately: how to evoke emotion with a mere sketch, or a gesture, with the simplest materials. Kermit is essentially a sock with ping pong balls for eyes. But when a skilled puppeteer performs with him, everyone forgets about the hand inside, and feels that they’re talking to a very charismatic frog.

So, how do we create characters from the materials of AI that feel alive? Here are some things I’ve found that work.

1. MAKE THEM MEMORABLE

When I was first thinking about creating a cast of characters at Duolingo, the legendary producer Fred Seibert, my friend and mentor, told me that what makes a character distinctive is “the way they look, the way they move, and the way they sound.” That’s it. Three things.

I got to work with a world-class art team to define how Duolingo’s characters looked and moved. To develop and train Duolingo’s AI voices, we spent hours with voice actors finding a way for each character to speak that was funny and memorable. For Lily, our emo girl, whose AI voice was trained by the actor Melody Peng (who also voices her in our traditional animation), we realized it was funniest when she said every line like her mom was forcing her to do it. Immediately iconic.

2. THINK ABOUT ARCHETYPES

In storytelling, there are a number of types of characters that occur again and again. There are whole books about this, and sites like TV Tropes where you can really geek out.

One of my favorite archetypes is the tsundere, which people might know from Japanese anime. This is a character who starts out harsh and hostile, but warms to you over time as you earn their respect. Think about how much fun an AI modeled on this archetype could be: one that actually makes you work for its affection, instead of endlessly complimenting you.

3. USE SHORTHAND

In writing for movies and TV, we’ll make series bibles, which often include detailed writeups of characters’ backstories, motivations, flaws, hopes, and dreams. That’s great for a human writer. But feed that whole thing into a prompt, and you’ve just made your token count huge and given the model lots of complex instructions it can get wrong.

LLMs have tons of training data around pop culture and tropes, and will know of lots of characters, so you can say something like, “This character is like a 14-year-old emo version of April Ludgate from Parks and Rec,” and you’ll probably get dialogue that’s a good way there. Combine that with your character’s unique look, sound, and feel, and you’re off.
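To make the shorthand idea concrete, here is a minimal sketch in Python. It is an illustration only, not how Duolingo built its prompts: the function name `build_character_prompt`, the 60-word budget, and the use of word count as a rough stand-in for tokens are all assumptions made for this example. The prompt text itself reuses the April Ludgate shorthand and Lily’s “mom is forcing her” delivery described above.

```python
def build_character_prompt(shorthand: str, delivery_notes: list[str], max_words: int = 60) -> str:
    """Assemble a short character prompt from a pop-culture shorthand plus delivery notes.

    Hypothetical helper for illustration. Word count is only a rough proxy for
    tokens; real tokenizers will give different numbers.
    Raises ValueError when the prompt goes over budget, as a nudge to keep it
    short instead of pasting in a whole series bible.
    """
    lines = [shorthand.strip()]
    lines.extend(f"- {note.strip()}" for note in delivery_notes)
    prompt = "\n".join(lines)

    word_count = len(prompt.split())
    if word_count > max_words:
        raise ValueError(f"prompt is {word_count} words; budget is {max_words}")
    return prompt


# One line of shorthand, one note on how the character sounds.
lily_prompt = build_character_prompt(
    shorthand="This character is like a 14-year-old emo version of April Ludgate from Parks and Rec.",
    delivery_notes=["Says every line like her mom is forcing her to do it."],
)
print(lily_prompt)
```

The budget check is the design choice worth noticing: it treats brevity as a constraint you enforce, so the pop-culture reference does the heavy lifting and the notes only cover what makes your character different.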

4. EMBRACE FLAWS

Limitations and flaws are endearing and relatable. R2-D2 and C-3PO were superhuman at things like interfacing and translating, but they also had major limitations and annoying quirks. Nobody loved them in spite of their flaws; they loved them because of them.

It’s ALL the Milk

I’ll leave you with this. When I was first trying to understand generative AI, a PM at Duolingo named Zan Gilani said to me, “You know how when you drink milk, it’s not from one cow — it’s like, from ALL the cows?”

I’d never thought about milk that way, and honestly, I didn’t want to drink milk again for a while after that. But it’s a reminder: LLMs are just drawing from all the stuff we’ve made. There’s nothing actually unnatural about them. The soul is in there, if you can figure out how to draw it out.